Neural Prism 2105318722 Hyper Beam

Neural Prism 2105318722 Hyper Beam proposes rapid, parallelized inference by dividing input data into streams handled by dedicated cores. The concept emphasizes throughput, specialized routines, and hardware acceleration, with speculative notes on quantum coherence as a potential boost. Proposed applications span imaging, communications, and materials analysis, but safety, governance, and skepticism remain central. The balance between speed and reliability is unsettled, and critical questions about verification and accountability persist as the approach advances.

What Neural Prism 2105318722 Hyper Beam Is and Why It Matters

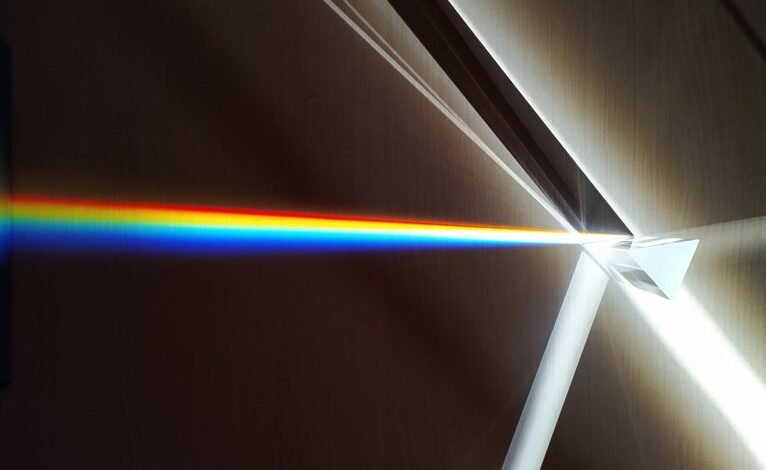

Neural Prism 2105318722 Hyper Beam refers to a proposed computational concept that combines prism-like data partitioning with high-intensity, multi-spectral signal processing to achieve rapid, parallelized inference.

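The central idea is that a neural prism splits incoming data, much as an optical prism splits light, so that parallel cores and dedicated hardware can process each part at the same time and accelerate inference.

Skeptics point to loosely specified core algorithms and unproven hardware reliability, and urge rigorous validation before the concept is adopted as foundational technology.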


How the Hyper Beam Works: Core Algorithms and Hardware

The Hyper Beam operates by partitioning input data into parallel streams, each processed by a dedicated core that executes a tightly scoped routine designed for rapid inference. The architecture emphasizes focused neural processing and hardware acceleration and prioritizes data throughput, though claims about its performance warrant caution. Quantum coherence remains a speculative optimization; any gains depend on careful calibration and verifiable benchmarks rather than on theoretical promise alone.
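The partition-and-process flow can be illustrated with a minimal Python sketch. It assumes the input is a flat list of numbers, uses a thread pool from `concurrent.futures` to stand in for dedicated cores, and uses a hypothetical `stream_routine` (a simple threshold) in place of whatever per-stream inference the Hyper Beam would actually run. The names `partition`, `stream_routine`, `parallel_infer`, and `verify_against_sequential` are illustrative, not part of any published specification. The final function reflects the emphasis on verifiable results: the parallel output is checked against a sequential baseline.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

Routine = Callable[[List[float]], List[float]]


def partition(data: Sequence[float], n_streams: int) -> List[List[float]]:
    """Split data into n_streams contiguous chunks whose sizes differ by at most one."""
    if n_streams < 1:
        raise ValueError("n_streams must be >= 1")
    size, rem = divmod(len(data), n_streams)
    chunks: List[List[float]] = []
    start = 0
    for i in range(n_streams):
        # The first `rem` chunks take one extra element each.
        end = start + size + (1 if i < rem else 0)
        chunks.append(list(data[start:end]))
        start = end
    return chunks


def stream_routine(chunk: List[float]) -> List[float]:
    """Hypothetical tightly scoped per-stream routine: threshold each value at 0.5."""
    return [1.0 if x > 0.5 else 0.0 for x in chunk]


def parallel_infer(data: Sequence[float], n_streams: int = 4,
                   routine: Routine = stream_routine) -> List[float]:
    """Process each partition on its own worker, then reassemble in input order."""
    chunks = partition(data, n_streams)
    with ThreadPoolExecutor(max_workers=n_streams) as pool:
        # pool.map returns results in the same order as the input chunks.
        results = list(pool.map(routine, chunks))
    return [y for chunk in results for y in chunk]


def verify_against_sequential(data: Sequence[float], n_streams: int = 4,
                              routine: Routine = stream_routine) -> bool:
    """Benchmark-style check: parallel output must equal a single-stream baseline."""
    return parallel_infer(data, n_streams, routine) == routine(list(data))


if __name__ == "__main__":
    sample = [0.1, 0.7, 0.4, 0.9, 0.6, 0.2, 0.8]
    print(partition(sample, 3))
    print(parallel_infer(sample, 3))
    print(verify_against_sequential(sample, 3))
```

For CPU-bound routines in CPython, `ProcessPoolExecutor` would be the closer analogue to dedicated cores, since threads share the global interpreter lock; the thread pool is used here only to keep the sketch simple. The sequential comparison is deliberately cheap, so it can run alongside any speed measurement to confirm that partitioning has not changed the results.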

Real-World Applications: Imaging, Communication, and Materials Analysis

Imaging, communications, and materials analysis could benefit from the Hyper Beam's proposed ability to turn rapid inference into practical measurements and decisions. The technology promises faster insight, yet sustained skepticism about reliability and edge cases remains warranted.

In practice, ethical imaging practices and materials safety must guide deployment through consistent data handling, transparent reporting, and rigorous risk assessment, all within open frameworks that respect individual freedoms.

Safety, Ethics, and Responsible Use of the Hyper Beam

How should organizations deploy the Hyper Beam to balance speed with safety and accountability? The answer lies in governance, not enthusiasm. Clear ethical guidelines should set deployment limits and establish requirements for transparency and consent. Rigorous risk assessment should accompany every use, addressing misapplication and unintended consequences. Proportional safeguards, backed by monitoring, audits, and durable redress mechanisms, can preserve freedom while preventing harm. Skepticism remains essential for responsible adoption.

Conclusion

The Hyper Beam promises speed through parallel streams and concentrated routines, yet its merit hinges on verifiable benchmarks and robust safeguards. Its central image, data as mirrored threads weaving toward a shared insight, highlights both the potential and the risk: faster inference must not come at the cost of reliability. Skepticism remains warranted, especially where quantum-coherence ideas enter speculative terrain. Real-world adoption must demand rigorous testing, transparent governance, and fail-safe controls so that speed serves accountability rather than obscuring error.